Message boards : Graphics cards (GPUs) : GTX 460

| Author | Message |

|---|---|

|

The new GTX 460 could be quite good in terms of performance for gpugrid. Possibly faster than a GTX480 with a little overclock. | |

| ID: 17951 | Rating: 0 | rate:

| |

|

I think you are getting carried away. I expect it will be faster than a GTX285 and a GTX465. It might even come close to a GTX470, especially if you get an 800MHz one, but I doubt it will do more than a GTX480. That said two GTX460's should do more than one GTX480, and cost about the same. | |

| ID: 17956 | Rating: 0 | rate:

| |

|

Maybe we will have to wait for the GTX475 then for faster performance. | |

| ID: 17957 | Rating: 0 | rate:

| |

|

I was just about to pull the trigger on one of the GTX 460 cards but at the last minute thought to check other hosts running the Fermis. Turns out the GTX 470 is only 10-35% faster (depending on OC) than my GTX 260 and the only GTX 465 I could find is actually slower. Think I'll wait till we see some real results. The price looks good if the performance lives up to the hype though. | |

| ID: 17958 | Rating: 0 | rate:

| |

|

Hopefully the new design will actually work better for GPUGrid than for Folding@Home, but these cards seem to be about introducing new technology rather than improving on existing performance. That said, I agree the architecture is much better, and the cards are more sellable! I’m slightly worried about the shaders, but we won't know for sure how they perform until someone actually buys one, attaches it to GPUGrid and successfully runs a work unit. I think they will be an excellent card to buy for those just wanting one decent card in their system; they look like good value for money. | |

| ID: 17960 | Rating: 0 | rate:

| |

|

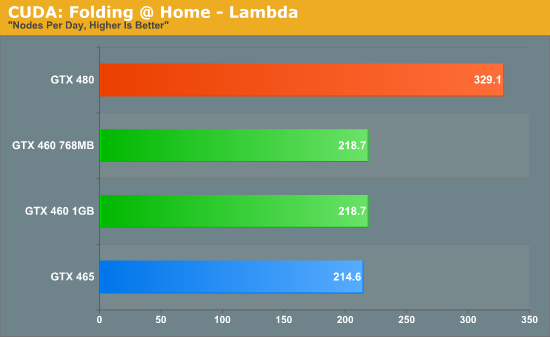

That graph style is Anandtech, but I can't find any GP-GPU stuff in the 2 launch day reviews. Where is it from? I thought it was unsatisfying to bench games only, as there's not much to see there anyway.. | |

| ID: 17963 | Rating: 0 | rate:

| |

|

Looks to be a couple of choices on the 460 ... I am open to all opinions on the following pseudo poll ... I'll get one just because I want to see what it can really do and GDF sounds much too enthused for me not to :-) | |

| ID: 17965 | Rating: 0 | rate:

| |

Looks to be a couple of choices on the 460 ... I am open to all opinions on the following pseudo poll ... I'll get one just because I want to see what it can really do and GDF sounds much too enthused for me not to :-) Contrary to my post above, just ordered the EVGA 768MB Superclocked (763 MHz factory OC). It was $20 more than the stock version but has a lifetime warranty instead of 2 years. Couldn't pass up the price though, under $167 after discounts and Bing cashback. Hope it's faster than my GTX 260 :-) | |

| ID: 17966 | Rating: 0 | rate:

| |

|

We have to test on one to know the performance. I am just saying that it is better designed and should allow a higher overclock than the original Fermi. | |

| ID: 17967 | Rating: 0 | rate:

| |

|

There is no doubt it is more competitively designed – designed to sell, and they will, partially because they will overclock well; 800MHz for the GPU should be the standard, but the problem might be the memory controller. I suspect this is not the case and that Beyond made a good choice; nice high factory clock, the less expensive 768MB card, and with a lifetime warranty :) I'm guessing at least 30% faster than a GTX260. In the UK we can pick a GTX460 up for just over £150 - My GTX470 cost more than twice that! | |

| ID: 17971 | Rating: 0 | rate:

| |

|

Don't worry, I am waiting for two new 768MB GTX 460s. One arrives tomorrow and the second next week. I will set up SLI, but for several days I will work with just one. | |

| ID: 17973 | Rating: 0 | rate:

| |

|

@SnowCrash: | |

| ID: 17975 | Rating: 0 | rate:

| |

|

I am using a GTX460-1GB now, but it does not work. <core_client_version>6.10.56</core_client_version> http://www.gpugrid.net/result.php?resultid=2669959 http://www.gpugrid.net/result.php?resultid=2672409 I tried resetting the project, but that did not help. Specifications: M/B: ASUS P5E; CPU: Intel Xeon X3360; RAM: PC2-6400 2GBx2; GPU: Kuroutoshikou GF-GTX460-E1GHD (made by Sparkle Computer) x2; Driver: FW258.96; OS: Windows XP Pro SP3 x86 | |

| ID: 17987 | Rating: 0 | rate:

| |

|

Doesn't seem that any of the Fermi cards are being correctly identified: | |

| ID: 17989 | Rating: 0 | rate:

| |

|

The core is so different that it is likely that CUDA3.0 does not work on the GTX460. | |

| ID: 17992 | Rating: 0 | rate:

| |

|

I tried the ACEMD beta version v6.32 (cuda31) on the GTX460, but it did not work either. <core_client_version>6.11.1</core_client_version> I updated the BOINC client to 6.11.1. Is anyone else using a GTX460? If so, does GPUGRID work for you? | |

| ID: 17998 | Rating: 0 | rate:

| |

|

Might be an idea to close Boinc and then open it again (wait for 10sec before opening it). | |

| ID: 18000 | Rating: 0 | rate:

| |

|

My new GTX460 doesn't work in GPUGRID!!!! | |

| ID: 18002 | Rating: 0 | rate:

| |

|

The 460 came today. | |

| ID: 18004 | Rating: 0 | rate:

| |

Contrary to my post above, just ordered the EVGA 768MB Superclocked (763 MHz factory OC). It was $20 more than the stock version but has a lifetime warranty instead of 2 years. Couldn't pass up the price though, under $167 after discounts and Bing cashback. Hope it's faster than my GTX 260 :-) Received the GTX 460 today. Good & bad news so far, all results at stock factory OC. The good news: The above GTX 460 runs Collatz very well for NVidia. WU time was 14:30 versus 30 minutes for the GTX 260 and 58 minutes for the GT 240, both of which have the shader clocks well over stock. Temps are 53C at full load. As a comparison with ATI my HD 4770 cards average 15:45 per WU. More good news: It runs DNETC well too, 23:52 versus 101 minutes for both my 9600GSO and GT 240 which have the shaders pushed to as high as they will run reliably. The bad news: So far will run neither the GPUGRID Fermi WUs nor the latest beta WUs. Here's the error message for the beta, always the same: <core_client_version>6.10.57</core_client_version> <![CDATA[ <message> - exit code -40 (0xffffffd8) </message> <stderr_txt> # Using device 0 # There is 1 device supporting CUDA # Device 0: "GeForce GTX 460" # Clock rate: 0.81 GHz # Total amount of global memory: 774307840 bytes # Number of multiprocessors: 7 # Number of cores: 56 SWAN : Module load result [.fastfill.cu.] [200] SWAN: FATAL : Module load failed </stderr_txt> | |

| ID: 18005 | Rating: 0 | rate:

| |

|

Although it does not work here yet, it still sounds like a very good card. The GF100's did not work straight out of the box either, so don't fret it. | |

| ID: 18008 | Rating: 0 | rate:

| |

Although it does not work here yet, it still sounds like a very good card. The GF100's did not work straight out of the box either, so don't fret it. > NVIDIA GPU 0: GeForce GTX 460 (driver version 25856, CUDA version 3010, compute capability 2.1, 738MB, 363 GFLOPS peak Notice also it's listed as compute capability 2.1. I think the other Fermis were 2.0. What's the difference? I just stuck the Kill-A-Watt on it and the total system draw is 227 watts running Collatz and 4 CPU projects at 100% with a Phenom 9600BE CPU. | |

| ID: 18010 | Rating: 0 | rate:

| |

Ok, that explains why the current app isn't working on the 460s. We can get that fixed pretty quickly. MJH | |

| ID: 18012 | Rating: 0 | rate:

| |

|

Yeah, the GF100 Fermi's are only CC2.0. I'm guessing the GF104 cards will better exploit CUDA 3.1 and 3.2. | |

| ID: 18013 | Rating: 0 | rate:

| |

I actually stumbled across something useful? What's the difference between 2.0 & 2.1? | |

| ID: 18014 | Rating: 0 | rate:

| |

I actually stumbled across something useful? Yep, ta. What's the difference between 2.0 & 2.1? With Cuda 3.1 it looks like there's a gnat's crotchet of difference between code targeted for 2.0 and 2.1, but I expect it will change in future compiler releases. Although the ISA likely hasn't changed between the GF100 and GF104, with the latter being superscalar, instruction ordering is going to be much more important than on the original Fermi and will mean more optimisation work in the compilation. The only reason the current app isn't working is because it doesn't know that the 2.0 Fermi kernels can be used on 2.1 devices. MJH PS Intriguingly, the compiler also accepts 2.2, 2.3 and 3.0 as valid compute capabilities. Make of that what you will. | |

| ID: 18015 | Rating: 0 | rate:
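
A minimal sketch of the fix MJH describes above, namely letting a compute capability 2.1 device fall back to kernels built for 2.0. This is not the ACEMD or SWAN code; `pick_kernel_target` and the list of compiled targets are hypothetical names used only for illustration. The rule it encodes is CUDA's binary compatibility rule: a binary built for one compute capability runs on devices with the same major version and an equal or higher minor version.

```python
from typing import Iterable, Optional, Tuple

# (major, minor), e.g. (2, 1) for a GTX 460
ComputeCapability = Tuple[int, int]


def pick_kernel_target(
    device_cc: ComputeCapability,
    compiled_targets: Iterable[ComputeCapability],
) -> Optional[ComputeCapability]:
    """Return the newest compiled target the device can run, or None.

    Hypothetical helper: a target is usable when it has the same major
    version as the device and a minor version that is not higher.
    """
    usable = [
        target
        for target in compiled_targets
        if target[0] == device_cc[0] and target[1] <= device_cc[1]
    ]
    return max(usable, default=None)


# Assumed set of kernels shipped with an app: one for G200, one for GF100.
compiled = [(1, 3), (2, 0)]

print(pick_kernel_target((2, 1), compiled))  # (2, 0): GF104 reuses the 2.0 kernels
print(pick_kernel_target((1, 3), compiled))  # (1, 3): exact match for a GTX 260
```

An exact-match lookup would return nothing for (2, 1) here, which matches the "Module load failed" symptom reported earlier in the thread.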

| |

Anyone care to drop the BOINC alpha mailing list a note that their number of multiprocessors are correct, but they have to multiply them by 32 for GF100 and by 48 for GF104 to get the correct number of shaders? Have just done. Also ordered one card (a 768Mb version, factory OC'ed). ____________ BOINC blog | |

| ID: 18017 | Rating: 0 | rate:

| |

|

We will try to give a new application for the 460 on Monday. | |

| ID: 18018 | Rating: 0 | rate:

| |

|

Awesome! Can't wait to try it out. | |

| ID: 18019 | Rating: 0 | rate:

| |

|

@MarkJ: sorry, this incorrect reporting is an issue of GPU-Grid, not BOINC! SK reported this to them yesterday or so.. don't be surprised if they're a little p***** off. Just tell them it was my fault ;) | |

| ID: 18021 | Rating: 0 | rate:

| |

Not having any luck with the 460 on Folding. It is running 3dmark 06 right now so the drivers must be working, correct? The GTX 460 works very nicely in both Collatz & DNETC. @GTX460 & Collatz: don't forget that CC is a comparatively light workload, i.e. the cards draw considerably less power here than under GPU-Grid (or Milkyway for ATIs). Just checked DNETC with the Kill-A-Watt. The GTX 460 is now running at 800 core & 1600 shaders, stock voltage (a bump from the 763/1526 factory OC). 239 watts total system draw at 97% GPU. The core/shader speeds seem to be locked together at least in MSI Afterburner v1.61 and unlike earlier cards are not allowed to be pushed separately. Not sure if this is a Fermi requirement or a problem in Afterburner. Not a big deal though. Anyway, 14:30 is a nice result! The 1 GB version may even improve this a bit, as CC loves memory bandwidth. For comparison: my HD4870 takes about 13 mins at 800 / 950 MHz core / mem. That's for 1.28 TFlops and 122 GB/s bandwidth. The GTX460 768 MB weighs in at 0.9 TFlops and 86 GB/s. Apparently we're seeing slightly better utilization of the nVidia shaders here. With half a day's results to average with the card set to 800/1600, Collatz is now averaging 13:40/WU and DNETC 21:50/WU. Temps still running at 53C. | |

| ID: 18025 | Rating: 0 | rate:
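
The TFlops and GB/s figures quoted in the post above can be reproduced with a little arithmetic. The sketch below assumes 2 FLOPs per shader per clock (one multiply-add) and that GDDR5 moves 4 bits per pin per memory clock. The HD4870's 800 shaders and 256-bit bus, and the GTX 460 768 MB stock values (1350 MHz shader clock, 900 MHz memory clock, 192-bit bus), are reference specifications assumed here; they are not stated in the thread.

```python
def peak_gflops(shaders: int, shader_clock_mhz: float) -> float:
    """Theoretical single-precision peak, assuming 2 FLOPs per shader per clock."""
    return shaders * 2 * shader_clock_mhz / 1000.0


def gddr5_bandwidth_gbs(bus_width_bits: int, memory_clock_mhz: float) -> float:
    """Theoretical bandwidth, assuming 4 transfers per pin per memory clock."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * memory_clock_mhz * 4 / 1000.0


# HD4870 at 800 / 950 MHz core / mem, as quoted in the post.
print(peak_gflops(800, 800))           # 1280.0 GFLOPS, i.e. 1.28 TFlops
print(gddr5_bandwidth_gbs(256, 950))   # 121.6 GB/s, i.e. about 122 GB/s

# GTX 460 768 MB at assumed stock clocks.
print(round(peak_gflops(336, 1350), 1))          # 907.2 GFLOPS, i.e. about 0.9 TFlops
print(round(gddr5_bandwidth_gbs(192, 900), 1))   # 86.4 GB/s, i.e. about 86 GB/s
```

These are theoretical peaks only; as the Collatz timings in the post show, real throughput depends on how well an application keeps the shaders busy.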

| |

|

So Fermi core and shader clocks are linked on GF100 and GF104 cards alike; we have to overclock both at the same time. | |

| ID: 18026 | Rating: 0 | rate:

| |

Anyway, 14:30 is a nice result! The 1 GB version may even improve this a bit, as CC loves memory bandwidth. For comparison: my HD4870 takes about 13 mins at 800 / 950 MHz core / mem. That's for 1.28 TFlops and 122 GB/s bandwidth. The GTX460 768 MB weighs in at 0.9 TFlops and 86 GB/s. Apparently we're seeing slightly better utilization of the nVidia shaders here. Since I use the optimized Collatz app on my ATI cards, decided to give it a try on the GTX 460. After finding the optimum settings it's now averaged just under 10:58 for the last 20 WUs (with a range of 10:38 - 11:20). GPU use has increased to 99% and temps to 55C - 57C depending on ambient (we're in the middle of a heat wave for us in Minnesota), fan bumps a bit to 44% at 57C. Core/shaders still at 800/1600. Memory is stock (for the Superclocked version) at 1900 according to Afterburner. Total system draw has increased to 247 watts with 4 CPU projects running at 100%. PS is an Antec EarthWatts 380 watt which is considerably less than recommended. | |

| ID: 18035 | Rating: 0 | rate:

| |

|

Keep the memory at stock. If you overclock the memory on the GTX460 it will end up slowing the card down due to increased errors (the controller is almost at its max as is, which is why they used 4000MHz max. rated RAM rather than 5000MHz)! | |

| ID: 18038 | Rating: 0 | rate:

| |

By the way anyone with a GF100 Fermi should increase the fan speed while crunching. There are 2 reasons: Completely agreed! Think of it like an off shore oil well cap. Don't use one and it leaks everywhere. Only use one a bit and it still leaks, badly. Use a good one the correct way, and you have stemmed the flow as much as you can. I'd rather put it this way: temperature is equivalent to movement of particles, including the atoms (which should have fixed positions in your chip) and the free electrons. If it's hotter the latter are more often kicked around randomly and thus they sometimes go where they shouldn't - and that's your leakage. MrS ____________ Scanning for our furry friends since Jan 2002 | |

| ID: 18041 | Rating: 0 | rate:

| |

@MarkJ: sorry, this incorrect reporting is an issue of GPU-Grid, not BOINC! SK reported this to them yesterday or so.. don't be surprised if they're a little p***** off. Just tell them it was my fault ;) Report-back from boinc_alpha mailing list: Tell it to NVIDIA; they don't provide an API for getting the number of cores. I've asked NVIDIA to confirm that all CC 2.1 chips have 48 cores/MP; if this is the case I'll add that logic to the client. Note: this matters only for providing an accurate peak FLOPS message on startup. Everything related to scheduling and credit is determined by the actual performance, not the peak FLOPS. -- David On 17-Jul-2010 2:11 AM, Richard Haselgrove wrote: > The 32x part of that is already hard-coded into the BOINC client, as a fix > for the original GF100-based Fermi cards (GTX 470 and GTX 480) - the > original hard-coded value of 8 for compute capability 1.x cards produced > under-reporting as well. (Changesets 21034, 21036) > > Cards based on the GF104 chip - the GTX 460 released five days ago, and the > GTX 475 due later in Q3, have a shader count of 48 per multiprocessor, so > need yet another hard-coded CC 2.1 test. > > Surely this is a prehistoric way of doing things? We shouldn't have to > change the infrastructure framework every time a new chip is released. > Shader count detection belongs in the NVidia driver and API, not at the > application level. > > Is there any way BOINC itself, and the projects directly affected, can join > together and make representations to NVidia to get their API extended? | |

| ID: 18042 | Rating: 0 | rate:
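
The hard-coded lookup discussed in the mailing list exchange above can be sketched as follows. The per-multiprocessor values (8 for compute capability 1.x, 32 for 2.0, 48 for 2.1) come from the thread; the function names are hypothetical, and the peak formula (cores x clock x 2) is an assumption about how the startup figure is derived, not a copy of the BOINC client code.

```python
def cores_per_multiprocessor(major: int, minor: int) -> int:
    """Shader cores per multiprocessor, keyed on compute capability."""
    if major == 1:
        return 8          # G80 / G200 generation
    if (major, minor) == (2, 0):
        return 32         # GF100 (GTX 470 / GTX 480)
    if (major, minor) == (2, 1):
        return 48         # GF104 (GTX 460)
    raise ValueError(f"unknown compute capability {major}.{minor}")


def peak_gflops(multiprocessors: int, cores_per_mp: int, clock_ghz: float) -> float:
    """Assumed peak formula: 2 FLOPs per core per clock."""
    return multiprocessors * cores_per_mp * clock_ghz * 2


mps = 7            # "Number of multiprocessors: 7" from the GTX 460 stderr output
clock_ghz = 0.81   # "Clock rate: 0.81 GHz" from the same output

print(mps * cores_per_multiprocessor(1, 3))   # 56, the core count the app printed
print(mps * cores_per_multiprocessor(2, 1))   # 336, the real GTX 460 shader count

# Using the GF100 value of 32 gives about 363, consistent with the
# "363 GFLOPS peak" line BOINC printed for the GTX 460.
print(round(peak_gflops(mps, 32, clock_ghz)))
```

As David notes, the figure only affects the startup message; scheduling and credit follow measured performance.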

| |

|

SLI stock 460's; currently running DNETC while waiting for GPUGrid. Cuda 3.1 WU's complete in 13 min (win7 x64.) | |

| ID: 18048 | Rating: 0 | rate:

| |

|

Now it runs on gtx460 with acemdbeta 6.36. | |

| ID: 18053 | Rating: 0 | rate:

| |

|

First unit OK on a GTX460 | |

| ID: 18065 | Rating: 0 | rate:

| |

Now it runs on gtx460 with acemdbeta 6.36. How does one run "acemdbeta 6.36"? I downloaded some work units and they all failed. They said something about CUDA 30? Does that mean I am running CUDA 3.0? How can you tell? | |

| ID: 18066 | Rating: 0 | rate:

| |

|

You are now picking them up. Just let them run. You have driver 25896, which is good. For some reason you aborted one 6.36 Beta? Anyway hope the next one works for you. | |

| ID: 18068 | Rating: 0 | rate:

| |

SLI stock 460's; currently running DNETC while waiting for GPUGrid. Cuda 3.1 WU's complete in 13 min (win7 x64.) That's running both GPUs in tandem on one WU? They're now running at around 21:50 on my single GTX 460. | |

| ID: 18070 | Rating: 0 | rate:

| |

|

That's with both GPUs working on one WU, so your output from one card is better than my SLI setup. I'll test it on the grid and see if I should go back to using 2 cards for 2 WUs. | |

| ID: 18074 | Rating: 0 | rate:

| |

|

A question: are the ACEMD beta v6.36 units meant to be this small? My GTX460 finishes one in 15 min and I can't download more than 1 or 2 at a time. I need more units, or bigger ones. | |

| ID: 18075 | Rating: 0 | rate:

| |

|

The full tasks are now available for your GTX460. They are called, ACEMD2: GPU molecular dynamics v6.11 (cuda31). | |

| ID: 18084 | Rating: 0 | rate:

| |

|

My 2 460's were crunching the new 3.10 app WU's when I last checked them. They were saying ~6 hours for each WU on Win 7. I haven't seen them complete yet, so I'm guessing my computer crashed :( | |

| ID: 18087 | Rating: 0 | rate:

| |

|

Is there any way to keep from downloading 3.0 tasks (which won't run) and only download 3.1 tasks? | |

| ID: 18095 | Rating: 0 | rate:

| |

Is there any way to keep from downloading 3.0 tasks (which won't run) and only download 3.1 tasks? No. There used to be, but the techs had to change the setup when Fermi arrived. trn-xs, the best thing you could do is use XP or Linux. If you can't do that then make sure your system has at least one free CPU and use the swan_sync=0 variable. | |

| ID: 18097 | Rating: 0 | rate:

| |

Thanks, I plan to move these 460's to a dedicated crunching computer with Win XP x64 when I return home. I'm away from my crunchers now and all I have is a Win7 computer :( I've enabled swan_sync=0 and left 2 threads open. Unfortunately once I added my 460's my system has become unstable. I'm not quite sure why, I've reinstalled Nvidia reference drivers and upped my fan speed to 70% with no overclock. It's not just GPUGrid, I had crashes with DNETC also. | |

| ID: 18100 | Rating: 0 | rate:

| |

Is there any way to keep from downloading 3.0 tasks (which won't run) and only download 3.1 tasks? If you have a Fermi and a 3.1 driver you should be downloading only 3.1 tasks. Isn't that the case? gdf | |

| ID: 18102 | Rating: 0 | rate:

| |

|

trn-xs now has driver 25896 on Win7 x64 and is using a 6 core i7. | |

| ID: 18106 | Rating: 0 | rate:

| |

trn-xs now has driver 25896 on Win7 x64 and is using a 6 core i7... Excellent detective work SK! I was having lots of stability issues, I've pulled my 2nd GTX460 and so far so good. All the restarting was due to the crashing, and the cuda 3.1 non beta WU that failed was due to crashing also. Also, as soon as the Cuda 3.1 app went into production I never received another 3.0 WU. I think you may be right about the amps on the PSU. I have a Corsair HX850 running a mildly overclocked 980x, was trying to run 2 gtx460's, 4 hds, 1 ssd. Wattage wise that should draw ~550 under load. CPU usage didn't seem to affect my crashing, under full load or idle I would still lock up. I'm also running on 220v (if that matters for internal computer amperage?) *Edit, HX850 has 70A on the 12v rail, that ought to be about double what is needed to run 2 gtx460's. | |

| ID: 18112 | Rating: 0 | rate:
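
The 12 V rail estimate in the post above is simple power arithmetic (watts = amps x volts). The sketch below uses the 70 A figure from the post; the per-card draw of 160 W is an assumed placeholder, not a measurement from this thread, so substitute your own number.

```python
def rail_watts(amps: float, volts: float = 12.0) -> float:
    """Power a rail can deliver at its rated current."""
    return amps * volts


def amps_needed(watts: float, volts: float = 12.0) -> float:
    """Current drawn from a rail by a load of the given wattage."""
    return watts / volts


rail_capacity = rail_watts(70)   # 70 A on the 12 V rail, as stated in the post
print(rail_capacity)             # 840.0 watts available on 12 V

# Assumption: roughly 160 W per card under load (hypothetical value).
assumed_card_watts = 160.0
cards = 2
print(round(amps_needed(cards * assumed_card_watts), 1))  # amps drawn by both cards
```

The CPU and drives also draw from the 12 V rail, so the cards are only part of the budget. Mains voltage (110 V versus 220 V) does not change the current on the PSU's internal rails.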

| |

|

It's possible that one of the cards is error prone, or is just running too hot, but it is more likely that the buildup of heat in the case is causing overheating on other components. Did you check the card, case and CPU temperatures? | |

| ID: 18114 | Rating: 0 | rate:

| |

Is there any way to keep from downloading 3.0 tasks (which won't run) and only download 3.1 tasks? The tasks seem to be working correctly now. When the small sized (187 point) beta work units first came out the GTX-460 computer #75987 was downloading both the CUDA 3.1 beta tasks and CUDA 3.0 work units as well. The CUDA 3.0 tasks would fail straight away. Take a look: http://www.gpugrid.net/results.php?hostid=75987 Anyhow the tasks seem to be working fine now... | |

| ID: 18119 | Rating: 0 | rate:

| |

That graph style is Anandtech, but I can't find any GP-GPU stuff in the 2 launch day reviews. Where is it from? I thought it was unsatisfying to bench games only, as there's not much to see there anyway.. http://www.anandtech.com/show/3809/nvidias-geforce-gtx-460-the-200-king/16 | |

| ID: 18120 | Rating: 0 | rate:

| |

|

Switched cards around so now the GTX 460 is running in XP64. GPU utilization went up from 49% to 95%. Times look to be much faster than before but still slower than my GTX 260. One would think that a more advanced 336 shader card should be faster than an old 216 shader card. The GTX 460 is also looking to be less than twice as fast as the 96 shader GT 240. Think there's still much work to do on the new app. | |

| ID: 18121 | Rating: 0 | rate:

| |

Switched cards around so now the GTX 460 is running in XP64. GPU utilization went up from 49% to 95%. Times look to be much faster than before but still slower than my GTX 260. One would think that a more advanced 336 shader card should be faster than an old 216 shader card. The GTX 460 is also looking to be less than twice as fast as the 96 shader GT 240. Think there's still much work to do on the new app. This is interesting information. We suffer the fact that multiprocessors went down from G200 to GF100/104. It is probably the same problem that we have with ATI cards, very fat multiprocessors. gdf | |

| ID: 18122 | Rating: 0 | rate:

| |

Switched cards around so now the GTX 460 is running in XP64. GPU utilization went up from 49% to 95%. Times look to be much faster than before but still slower than my GTX 260. One would think that a more advanced 336 shader card should be faster than an old 216 shader card. The GTX 460 is also looking to be less than twice as fast as the 96 shader GT 240. Think there's still much work to do on the new app. What a leap in GPU utilization! Do you think the GTX470 might get the same kick in performance as the GTX460 if running XP 64bit? Skgiven said he had an XP 64bit somewhere & he has a GTX470 but didn't think that it was worth giving a try if he had to get more RAM & possibly a new license. How much RAM do you have & what CPU was used? | |

| ID: 18124 | Rating: 0 | rate:

| |

What a leap in GPU utilization! Do you think the GTX470 might get the same kick in performance as the GTX460 if running XP 64bit? Skgiven said he had an XP 64bit somewhere & he has a GTX470 but didn't think that it was worth giving a try if he had to get more RAM & possibly a new license. How much RAM do you have & what CPU was used? I'm running XP 32-bit and it seems to be working at about the same speed as XP 64-bit. 15,913.17 seconds for a GTX-460 to complete a 4,535.61 / 6,803.41 point work unit on an old AMD 4000+ system. As I recall XP-64 bit was a pain in the neck (at least it was when it first came out), YMMV. | |

| ID: 18127 | Rating: 0 | rate:

| |

|

Bigtuna, your GTX 460 with 768MB using the 25896 driver and XP x86, is doing quite well for some tasks: | |

| ID: 18129 | Rating: 0 | rate:

| |

|

Hi Bigtuna, | |

| ID: 18130 | Rating: 0 | rate:

| |

Hi Bigtuna, Failed work units? So far the only failed work units have been the incompatible CUDA 3.0 work units that got sent during the beta testing. Full sized CUDA 3.1 tasks have been rock solid AFAIK. Of course they have only been running a day or two so that isn't much of a test. Are you also using the swan_sync environmental variable, and do you have a core free? That computer is running swan_sync=0 currently. Turned it "on" after the first couple full sized tasks. You can tell by the CPU time. With swan_sync "off" CPU time is minimal and with swan_sync=0 CPU time is about the same as the GPU time, and yes there is a free core available on that box. Did we ever decide exactly what swan_sync was? The card came with a factory OC to 763/1526. So far I'm impressed and disappointed at the same time. The 460 runs cool and quiet which is good but the performance is only about 2.5 x GT-240 but the price is 4 x GT-240. | |

| ID: 18131 | Rating: 0 | rate:

| |

|

The good news is I think I have figured out my GTX460 problems; bad news is I have one defective card that is prone to crashing. Running the cards one at a time let me troubleshoot the issue. | |

| ID: 18132 | Rating: 0 | rate:

| |

Did we ever decide exactly what swan_sync was? It is an environmental (system) variable used to synchronise the GPUGrid application to the CPU. I originally thought it linked the app specifically to a core but I now think it forces the operating system to continuously poll the app, allowing the app to have immediate CPU resources when required (removing a bottleneck). I expect the number zero causes continuous polling, whereas if a value of 5 was used for example it would only poll every 5 sec. So far I'm impressed and disappointed at the same time. The 460 runs cool and quiet which is good but the performance is only about 2.5 x GT-240 but the price is 4 x GT-240. This is the first app that actually works for the new GTX460 cards. There has been no time to look at the GF104 architecture in greater detail, and then refine the apps and tasks to suit the card. It may still have significant unrealised potential. The latest application v6.11 also improved performance for the first Fermi's (GF100), so hopefully an improved app will do the same for the GF104 cards in due course. The 6.11 app & latest driver combo also improves the Vista/W7 performance dramatically. Vista and Win 7 still perform slower than XP and Linux, but now do so to a similar extent, across all cards (they are equally slower, roughly speaking). Prior to the latest app the performance of Fermi's on Win7 and Vista was almost half what it is on XP. It's now only about 10 to 15% slower, on properly configured systems. As for price, that will drop over time in the same way the GF100 Fermi's dropped in price: My GTX470 cost £320, now it can be picked up for £277, and there are cheaper cards. You are also comparing the new GTX460 (2 weeks old) to a GT240 card that has dropped by some 40% since its release. People should not be too surprised about the present performance given the shader layout; it's totally different than for the GF100 cards. 
Basically it's performing as if it has 2/3rds the number of shaders, due to the way these are accessed. I doubt that the present app can tap into the last 33% of shaders efficiently, if at all. So perhaps there is good room for improvement. trn-xs, take the faulty card back and get a refund or a replacement. | |

| ID: 18135 | Rating: 0 | rate:

| |

Did we ever decide exactly what swan_sync was? It controls the method that ACEMD tells the CUDA runtime to use for polling for GPU work completion. The default method is for the application to block until completion; this keeps CPU load to a minimum but introduces latency that slows the program down. Setting SWAN_SYNC=0 will cause ACEMD to poll for kernel completion, which minimises latency at the cost of CPU. 0 is the only valid value for SWAN_SYNC - anything else will cause undefined behaviour, so don't do it! The 460 runs cool and quiet which is good but the performance is only about 2.5 x GT-240 but the price is 4 x GT-240. We will be turning our attention to improving the performance on GF104 cards after the summer vacations. We know what needs to be done. MJH | |

| ID: 18136 | Rating: 0 | rate:
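
The trade-off MJH describes above, blocking until the GPU finishes versus polling for completion, can be illustrated without a GPU. The sketch below is a CPU-only analogy using a Python thread as a stand-in for the kernel; it is not how ACEMD or the CUDA runtime is implemented, and `fake_kernel`, `wait_blocking` and `wait_polling` are hypothetical names.

```python
import threading
import time


def fake_kernel(done: threading.Event, seconds: float) -> None:
    """Stand-in for a GPU kernel: finishes after a delay and signals completion."""
    time.sleep(seconds)
    done.set()


def wait_blocking(done: threading.Event) -> float:
    """Default behaviour: sleep until woken. Low CPU use, some wake-up latency."""
    start = time.perf_counter()
    done.wait()
    return time.perf_counter() - start


def wait_polling(done: threading.Event) -> int:
    """SWAN_SYNC=0 style: spin on the completion flag. Minimal latency, one busy core."""
    spins = 0
    while not done.is_set():
        spins += 1
    return spins


# Blocking wait: the CPU is idle while the "kernel" runs.
done = threading.Event()
threading.Thread(target=fake_kernel, args=(done, 0.05)).start()
print(f"blocking wait returned after {wait_blocking(done):.3f} s")

# Polling wait: the loop count shows how much CPU work the spin burned.
done = threading.Event()
threading.Thread(target=fake_kernel, args=(done, 0.05)).start()
print(f"polling wait spun {wait_polling(done)} times")
```

The spin count is why CPU time rises to roughly match GPU time with SWAN_SYNC=0, as reported earlier in the thread, and why a free core is recommended.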

| |

|

Well mine arrived today. I have swapped out a GTX275 for it and it's off and running. | |

| ID: 18145 | Rating: 0 | rate:

| |

|

Well initial performance is actually worse than the GTX275. As MJH said they need to work on the app. I'd say GPU-Z needs an update too. | |

| ID: 18151 | Rating: 0 | rate:

| |

|

It's a bit early to tell clearly, but it looks like my 460 is outperforming my 280 so far. It's not a fair comparison, because my 280 is running old drivers and the 460 is OC'd to 750/1500. | |

| ID: 18152 | Rating: 0 | rate:

| |

|

Kill-a-Watt power info for the GTX-460. I've got the 768 MB version with a factory OC to 763/1526 MHz. | |

| ID: 18154 | Rating: 0 | rate:

| |

|

I think it would be generically more accurate to compare the GTX460 768MB with the GTX260-192, and compare the GTX460 1GB with the GTX260-216: | |

| ID: 18155 | Rating: 0 | rate:

| |

Well initial performance is actually worse than the GTX275. As MJH said they need to work on the app. I'd say GPU-Z needs an update too. Worse performance also than the GTX 260. I bought the GTX 460 specifically for GPUGRID but can't justify using it here until the performance improves. The good news is that it runs extremely well in Collatz and DNETC. @bigtuna: What was the percentage of GPU usage for your Kill-A-Watt readings? @sk: Wow, just when it seemed "silliest and most irrelevant post of the month" was wrapped up. It would be nice to stick to reality instead of speculation based on fantasy and misinformation. BTW, the 768MB and 1GB models of the GTX 460 perform the same in Folding, Collatz and DNETC. Collatz is an app that is very much affected by memory speed. I think we know the GTX 460 isn't being utilized well by GPUGRID. Hopefully that will improve. _______________________ Tried running the GTX 460 in XP64 with swan_sync set and 1 core reserved for the GPU (GX780 quad core system). The result was no significant speed increase in GPUGRID (1 minute or so) and loss of 1 CPU core in another project. In addition the system became very slow to respond. Without swan_sync the system response was fine. | |

| ID: 18156 | Rating: 0 | rate:

| |

|

The shader speed is more important here than the RAM speed. Besides, the actual speed of the GDDR5 is not the issue with the 768MB card versions, the issue is the memory interface! | |

| ID: 18157 | Rating: 0 | rate:

| |

GPUZ reports between 75 and 90 percent GPU load (it varies and so does the power). | |

| ID: 18158 | Rating: 0 | rate:

| |

Many games also observe this 10% reduction in performance between the 1GB and 768MB versions. Exaggeration and far too many exclamation points don't provide credibility. Even your own cherry-picked article doesn't show anywhere near 10% improvement in games. As you can see by the Anandtech test that you posted here, there is no difference between the 2 models at all in Folding: http://www.gpugrid.net/forum_thread.php?id=2227&nowrap=true#17956 As I'm sure you know the 1GB card also uses a higher stock voltage and thus uses more power, runs at higher temps and is generally less overclockable compared to the 768MB model even at its lower stock voltage (again, shown in the Anandtech article where you grabbed the Folding chart above). The truth is results vary depending on the application. Most DC apps are not memory constrained. A few are. Exclaiming something else doesn't make it true. | |

| ID: 18159 | Rating: 0 | rate:

| |

Did we ever decide exactly what swan_sync was? Awesome, thanks for the info. SWAN_SYNC is working great for me: The first work unit I ran was with SWAN_SYNC "off". It took 17,150 seconds for a 6,803 point task. With SWAN_SYNC "on" (SWAN_SYNC=0) similar tasks are taking about 15,850 seconds which is about an 8% difference. The difference is significant enough that I'm leaving SWAN_SYNC "on". Different operating systems and different hardware could react differently. Running those 6803 point tasks (larger tasks have been a bit slower) the GTX-460 is good for about 37,083 points per day with SWAN_SYNC=0. With SWAN_SYNC "off" the unit would pull down 34,272 points per day so the one sacrificed CPU core is good for about 2.8k points per day. 2.8k/day is more points than that one core would pull down running Rosetta. NOTE: Edited to have SWAN_SYNC in caps which seems to be required for at least some Linux distros. | |

| ID: 18161 | Rating: 0 | rate:
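
The points-per-day figures in the post above follow directly from the task size and run time. A minimal sketch of that arithmetic, using only the numbers quoted in the post (`points_per_day` is a hypothetical helper name):

```python
SECONDS_PER_DAY = 86_400


def points_per_day(points_per_task: float, seconds_per_task: float) -> float:
    """Daily credit if the GPU runs identical tasks back to back."""
    return points_per_task * SECONDS_PER_DAY / seconds_per_task


sync_off = points_per_day(6803, 17_150)   # SWAN_SYNC unset
sync_on = points_per_day(6803, 15_850)    # SWAN_SYNC=0

print(int(sync_off))            # 34272 points per day
print(int(sync_on))             # 37083 points per day
print(int(sync_on - sync_off))  # 2811, the "about 2.8k" bought by one CPU core

# Throughput gain relative to the slower run, roughly the 8% quoted.
gain_percent = (sync_on / sync_off - 1) * 100
print(f"{gain_percent:.1f}%")
```

Whether that gain is worth a CPU core depends on what the core would otherwise earn, which is the comparison the following replies argue about.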

| |

|

I don't think the 1Gb cards are going to provide any better bang for the buck. | |

| ID: 18162 | Rating: 0 | rate:

| |

But that's less than one core can earn in Aqua@home, which is why I keep my GTX 260 without swan_sync. | |

| ID: 18163 | Rating: 0 | rate:

| |

Same here, and CPU credits aren't exactly comparable to GPU credits. Some projects can only be run on the CPU and a core on those projects means a lot. On the other hand let's compare credits between the GTX 260 and GTX 460. My GTX 260 does around 40,000 credits/day in GPUGRID and about the same or even a bit less in Collatz. On the other hand my GTX 460 does around 35,000 credits/day in GPUGRID and about 103,000 credits/day in Collatz without sacrificing a CPU core. Quite a difference... | |

| ID: 18164 | Rating: 0 | rate:

| |

|

IMO the only way not to discriminate against or favour projects is if BOINC projects can agree to give credits based on CPU/GPU performance per watt & the CPU/GPU time used on WU's x deadline bonus. Is this even possible though??? | |

| ID: 18165 | Rating: 0 | rate:

| |

We are working on optimizing the application even further. In September some news. gdf | |

| ID: 18168 | Rating: 0 | rate:

| |

Will the other Fermi cards have performance benefit from this optimization, or just the GF104 based ones? | |

| ID: 18169 | Rating: 0 | rate:

| |

|

If it works, I would expect that all of them will benefit even g200 cards, but GF100 more and GF104 even more. | |

| ID: 18170 | Rating: 0 | rate:

| |

IMO the only way not to discriminate against or favour projects is if BOINC projects can agree to give credits based on CPU/GPU performance per watt & the CPU/GPU time used on WU's x deadline bonus. Is this even possible though??? Good idea. Thing is, the more credit a project gives, the more likely crunchers concerned mostly about points will crunch for said project. That gives projects a motive to hand out more points. Personally I'm not concerned about cross-project points, only points within my favorite projects, and only because the points represent a relative level of contribution. More points equals a bigger contribution. | |

| ID: 18172 | Rating: 0 | rate:

| |

If it works, I would expect that all of them will benefit even g200 cards, but GF100 more and GF104 even more. This sounds really promising. Looking forward to it and thanks for all the hard work. | |

| ID: 18178 | Rating: 0 | rate:

| |

|

i just read the topic quickly. correct me if i'm wrong, but if i've got an OC'd GTX275 there is no sense in buying a GTX460? | |

| ID: 18190 | Rating: 0 | rate:

| |

|

as MarkJ said: Well initial performance is actually worse than the GTX275. ok, it's clear for me - no rush at all :-) ____________  | |

| ID: 18191 | Rating: 0 | rate:

| |

|

It wouldn't hurt Nvidia if you bought a GTX460, & I don't think GPUGRID would complain either. Personally I'm happy when others buy things fast, expensive, & help to make it cheap & mature for me when I feel like going for it myself. | |

| ID: 18192 | Rating: 0 | rate:

| |

|

i'm trying to be smart :-) if i see no reasons to do smth - i will not do that. | |

| ID: 18193 | Rating: 0 | rate:

| |

|

CTAPbIi, at present your overclocked GTX275 is supported by mature drivers and 2 years of application development. It outperforms most GTX460's by about 25%. The GTX460 has only been released and will take time to optimise, after the summer holidays. | |

| ID: 18195 | Rating: 0 | rate:

| |

|

My general advice is: don't rush to buy anything, sit back and remember it's only a hobby after all. But if you see the opportune moment - go for it (e.g. some relative or friend needs a new card anyway). And if you buy go for best bang for the buck - but also remember that you only have so many PCIe slots and that running a PC to feed the GPU also costs power. I'd rather have 1 24/7 PC crunching with a fast GPU than 2 PCs with 2 slower GPUs. | |

| ID: 18196 | Rating: 0 | rate:

| |

|

thx a lot, guys :-) that's what i wanted to hear - clear answer, yes or no. | |

| ID: 18197 | Rating: 0 | rate:

| |

if the GTX460 gives fewer credits than my GTX275 on the same project - what's the sense in buying it? :-) My question was to clarify that. The real answer is: it depends. If you have the card to run GPUGRID then your GTX 275 will do more work but will also use more electricity. If you're running Collatz the GTX 460 will be more than twice as fast as the GTX 275. The GTX 460 is also faster in DNETC and Folding... | |

| ID: 18199 | Rating: 0 | rate:

| |

i just read the topic quickly. correct me if i'm wrong, but if i've got an OC'd GTX275 there is no sense in buying a GTX460? I don't think a GTX-460 makes sense for you to run GPUGRID at this time. Expect both drivers and apps for the GF104 to improve with time; we are expecting a boost sometime after the summer break. As I understand it the GF104 is underutilized at this time, much like my ATI HD-5770 cards are when Folding. They work, but they don't work up to their full potential. This is all quite normal, as software has traditionally lagged behind hardware. That said, I'm not sorry I purchased a GTX-460. The card is currently making decent credit and has the potential to do even better. I know it can be frustrating to have shiny new hardware that is not fully utilized but you can be assured that "they" will eventually work these things out. You might wait and see what becomes of the GTX-475 and see also what happens when the newer software becomes available. It is a good time to just wait a bit IMHO. | |

| ID: 18200 | Rating: 0 | rate:

| |

|

bigtuna, | |

| ID: 18203 | Rating: 0 | rate:

| |

Beyond, I agree for sure if you're only running Collatz, DNETC & RC5-72. The HD 5770 is as fast as the GTX 460 at Collatz and faster at the other two. In addition the HD 5770 is quite a bit less expensive. The advantage for the GTX 460 is that it can currently run GPUGRID and will hopefully run it well in the not too distant future. | |

| ID: 18204 | Rating: 0 | rate:

| |

|

pricewise GTX460 is pretty close to 5850, which will well outperform GTX460 in collatz, MW, etc. For MW i'm using 4870 and 4890, which gonna be replaced by 6970 this fall, but for GPUGRID i'm not sure what should I do. may be GTX475 is the answer... | |

| ID: 18205 | Rating: 0 | rate:

| |

Beyond, I've got twin HD-5770 cards in one system. Just for grins I ran the 5770 cards on Collatz for a day and they scream. They made more points in that one day than all my CPUs had made on Rosetta in months of crunching. I think the GTX-460 will be the one to have for GPUGRID. They already work pretty well considering how new they are. | |

| ID: 18209 | Rating: 0 | rate:

| |

|

I came across a few GTX460 2GB cards (Palit/Gainward, ECS, and Sparkle). Going by the specs, some offer a small advantage in clock speeds, but all are more expensive than 1GB cards. While these are not quite gimmick cards (they should be better for video editing and some games, especially 3D games, and for supporting 3 monitors in 2-way SLI gaming), don't buy one just to crunch with here - you would be better off with a cheaper card and high clocks, such as this Galaxy. | |

| ID: 18213 | Rating: 0 | rate:

| |

|

I've gone through a bunch of results for my GTX460 and compared them to the GTX275 that was in the machine previously. | |

| ID: 18215 | Rating: 0 | rate:

| |

|

should i buy a 5850 or a gtx460 ? what would work best with gpugrid ? (and if you will say that i shouldn't buy the gtx460 use arguments) | |

| ID: 18216 | Rating: 0 | rate:

| |

should i buy a 5850 or a gtx460 ? what would work best with gpugrid ? (and if you will say that i shouldn't buy the gtx460 use arguments) GPUGrid does not have an app that will run on a 5850 so the 460 would be a better choice. ____________ Thanks - Steve | |

| ID: 18217 | Rating: 0 | rate:

| |

should i buy a 5850 or a gtx460 ? what would work best with gpugrid ? (and if you will say that i shouldn't buy the gtx460 use arguments) If UR into "spicemen", math, or cryptography go with Ati, if UR into medical research go with Nvidia. If UR not interested in DC or GPGPU at all, go with Ati. That's my POV... ____________  | |

| ID: 18218 | Rating: 0 | rate:

| |

|

If I were you I would sell that GTX 260-192, if you have not already done so. | |

| ID: 18219 | Rating: 0 | rate:

| |

|

ok.. thanks for the arguments everyone.. so i'll go with the gtx460 then.. now the problem is: should i wait 2 months to buy it cheaper or not? | |

| ID: 18221 | Rating: 0 | rate:

| |

|

If you decide to wait then I would ask what your price point is ... the amount they *might* drop is quite small overall ... if you really need to save $20 USD then I doubt you would be crunching to begin with ... start building up those GPUGrid points sooner rather than later :cheers: | |

| ID: 18222 | Rating: 0 | rate:

| |

|

i'm looking for a 25$ drop in price because it costs a bit too much for me.. | |

| ID: 18224 | Rating: 0 | rate:

| |

|

Perhaps the sale of your other cards would sufficiently offset the purchase of a GTX460? | |

| ID: 18225 | Rating: 0 | rate:

| |

Hi all, the price is going down and the time to make a decision has come. I've seen a lot of different cards and found info about overclocking and so on. Speaking about 1024MB cards, GTX460s are clocked from 675 up to 815 MHz. The price difference is not that big, so it makes sense to think about it. Some people posted that overclocked cards are not stable, so these cards may be good for gaming but not for crunching. Could owners of GTX460 cards please post their experiences? Kind regards, Alexander | |

| ID: 18413 | Rating: 0 | rate:

| |

|

I got the MSI N460GTX CYCLONE 1GD5/OC GeForce GTX 460 (Fermi) 1GB 256-bit GDDR5 PCI Express 2.0 x16 HDCP and am very happy with it. While on GPUGrid RAC was about 30k ppd. On F@H now and doing very well, looks like 9kppd or a little better. I have it OCed to 800/1600/1800 and with a 24c ambient runs at 50c with a 93% load on F@H WU. Fan set to auto is 61%. I cannot hear it and it is less than 3 feet from me in a case with an open mesh screen on the side. | |

| ID: 18431 | Rating: 0 | rate:

| |

Some people posted, that overclocked cards are not stable, so these cards may be good for gaming but not for crunching. A factory OC does not increase the card's cost much but gets you a proportionally higher RAC - so it's probably worth it. If such a card is not stable as-is, you can send it back (faulty product), although that should not normally be the case. This would be expensive for the manufacturer, so they test the chips before they decide whether they should get normal or increased clocks. So you should actually get a slightly better chip with a factory OC'ed card. A little more interesting is long term stability: all chips degrade over time, depending on (1) voltage (2) temperature and (3) frequency, in descending order of importance. The result is that after some running time the chip can only reach slightly lower clock speeds at the same voltage and temperature. And at some point this "clock speed potential" crosses the stock clock. That's where it gets unstable. And since most chips of a batch are rather similar, they all have approximately the same clock speed potential, with a slight advantage for the better chips chosen for factory OC'ed cards. Therefore these chips may fail their factory clock speed earlier than stock clocked ones (e.g. a chip may be able to go 30 MHz higher but is clocked 50 MHz higher, so the "degradation margin" is reduced by 20 MHz). In this sense factory OC'ed cards may be less stable. However, you could still downclock them after 2 or 3 years and in the end reach a longer lifespan due to the higher clock speed potential. MrS ____________ Scanning for our furry friends since Jan 2002 | |

| ID: 18436 | Rating: 0 | rate:

| |

|

THX, that helped! | |

| ID: 18466 | Rating: 0 | rate:

| |

Some people posted, that overclocked cards are not stable, so these cards may be good for gaming but not for crunching. Might I add that the power consumption of the GTX460 is less than that of the GTX470. Some may argue that this in no way means that it's "green", but so long as the WU's don't have a high rate of failure & the science is valid, the work done on even the more power hungry GTX470 is much, much more than the "green" cards, CPU's, or anything else can do per watt consumed. Whether some "might" still use a GTX460 after 3 years is debatable. Whether too much of the CPU is being dedicated to the GPU is IMO also debatable, since no matter if the WU takes 3-4 hours more or less, having the PC running 24/7 with the GPU running all the time, sending WU's back 3-4 hours faster or slower, still uses a heck of a lot of power. I'm testing Fedora 13 LXDE 64bit & it hogs the CPU much more than other Linux distros I've tried. It seems stable (I've only briefly had it running). So if it's good, I'm happy. The only thing I've noticed with Fedora 13 is that it's easier (for me) to manually upgrade/downgrade the Nvidia driver & BOINC client on Ubuntu/Mint than on Fedora, so I'm not sure if it's a good idea to use it with new GPU's, given the constant need for the newest driver & BOINC client. ____________  | |

| ID: 18467 | Rating: 0 | rate:

| |

|

I have seen in the task manager that an application for the GTX 460 now occupies a complete CPU core. Is this normal now? Before, it was only 2-6%. | |

| ID: 18621 | Rating: 0 | rate:

| |

|

leprechaun, on Linux it will automatically use a full CPU core/thread but not on Win7. Not quite sure what you are asking, so a few general things that might cover your concerns: | |

| ID: 18627 | Rating: 0 | rate:

| |

|

Thank you, I think it lies with the variable SWAN_SYNC=0. | |

| ID: 18629 | Rating: 0 | rate:

| |

|

Just crunched a few GPUGrid tasks with a GTX480. | |

| ID: 18634 | Rating: 0 | rate:

| |

|

Before you completely dismiss CPUs please consider that if we could not crunch with CPUs there is a whole world of research that would never get done because GPUs simply are not flexible enough. GPUs are great at parallel processing, but the number of CPU projects vs GPU projects is an indicator of the current state of GPU crunching/folding capabilities and popularity. I consider GPUGrid the only GPU project worth any attention (well maybe F@H is OK) but DRAM settings are more important Could not be further from the truth. DRAM timings, bandwidth, etc. make almost no difference in CPU / GPU crunching. The one project I know of where DRAM makes any difference at all is climateprediction. Other than that, for CPU crunching raw core GHz is king and for GPUs it is all about the shaders. ____________ Thanks - Steve | |

| ID: 18635 | Rating: 0 | rate:

| |

|

Hi, you are absolutely right, I should have mentioned, which Projects benefit | |

| ID: 18654 | Rating: 0 | rate:

| |

|

One can put it this way: if an app is very dependent on memory it's probably not programmed in a good way, as CPUs are built to avoid memory access. Such programs will likely benefit from basic code optimization, using different algorithms to solve the problem etc. A program should normally mature past this point before it's deployed massively parallel via BOINC. | |

| ID: 18657 | Rating: 0 | rate:

| |

|

Hello! | |

| ID: 19284 | Rating: 0 | rate:

| |

|

gtx460, 35,820 secs (average) per wu (9.95 hours). My GTX295's are around 10 hours a wu | |

| ID: 19287 | Rating: 0 | rate:

| |

|

Hmm ok.. now i only have one WU to compare with and i had it stopped once.. | |

| ID: 19290 | Rating: 0 | rate:

| |

|

Lots of posts about this in the Forums and your observations tally well with others. | |

| ID: 19296 | Rating: 0 | rate:

| |

Personally I think this is a deliberate ploy to reduce RTM’s. I think you're reading too much into this. It's probably much more a matter of "can't get it to work properly" than "don't want it to work properly". Last quarter AMD had surpassed nVidia as the (non-Intel) GPU king and they've taken a lot of flak for the Fermi design. Plus the issue of non-existent GT200 mainstream chips for a very long time and the notebook chips recalls. They just can't afford to make their cards perform worse deliberately. MrS ____________ Scanning for our furry friends since Jan 2002 | |

| ID: 19298 | Rating: 0 | rate:

| |

Over the last week the GPUGrid techs started playing with different apps, so now if your GTX275 has a recent driver it will probably not be running the fast CUDA 2.2 app and will instead be using a CUDA 3.1 app, designed for Fermis and not G200 series cards. How individual cards perform on these is open to debate, but in particular the GT240s took a big hit in performance running the CUDA 3.1 app, and the latest drivers resulted in many cards dropping their clocks. This begs the question, why do we keep switching to apps that are slower and don't work well for many cards? I now have had enough WUs to make similar WU comparisons between 6.05 and the new 6.12 on various cards. I'm seeing a 5% to occasionally as much as a 20% (rare) slowdown with 6.12. Is there any advantage to 6.12 that made them replace the faster 6.05? Why do we keep getting new apps that seem to be inadequately tested? Is there any reason not to return to 6.05? | |

| ID: 19300 | Rating: 0 | rate:

| |

|

From a cruncher's point of view 6.05 was better. | |

| ID: 19301 | Rating: 0 | rate:

| |

From a cruncher's point of view 6.05 was better. It would be a really good idea to communicate reasons for changes if there are reasons. From the posts it looks like the Linux crunchers are taking an even bigger hit than those of us running Windows. Lately though we've had to revert to older drivers to avoid 6.11 and get back to 6.05, then as soon as we do that we get the slower running 6.12. To get rid of that it sounds like we're going to have to use an app_info.xml. Is it OK to do that? Who knows? What was the problem, if any, with 6.05? | |

| ID: 19302 | Rating: 0 | rate:

| |

|

As said in another post we are looking into it. | |

| ID: 19312 | Rating: 0 | rate:

| |

As said in another post we are looking into it. And in the meantime, should we waste our precious GPU time on the rubbish 6.12? It's absolutely uncrunchable on a Linux machine. If you don't put back the working version I will think about it as willful neglect. There is absolutely no reason to punish the Linux machine with that crap! It's not about 10% slower, it's about 1000% slower. That's plain ridiculous. ____________ Gruesse vom Saenger For questions about Boinc look in the BOINC-Wiki | |

| ID: 19313 | Rating: 0 | rate:

| |

|

The reason it is slower on Linux than before is that it no longer uses SWAN_SYNC=0 by default. So now it uses less CPU. I don't think that's useful, but it was requested again and again. Just set | |

| ID: 19325 | Rating: 0 | rate:

| |

Just set As I've said in some other post: I'm no programmer, I'm a user. In what GUI do I do that? What's the .bashrc? How will that influence other programs and projects? ____________ Gruesse vom Saenger For questions about Boinc look in the BOINC-Wiki | |

| ID: 19326 | Rating: 0 | rate:

| |

How will that influence other programs and projects? Oh the joys of Linux. Sorry, I can't tell you how to do it, but it certainly doesn't influence other software, as long as no one else chooses to call their environment variable "SWAN_SYNC". MrS ____________ Scanning for our furry friends since Jan 2002 | |

| ID: 19327 | Rating: 0 | rate:

| |

|

I don’t presently have a Linux system to try this on, and I am no Linux expert either, but I guess you just have to open up a command terminal and type in, | |

| ID: 19328 | Rating: 0 | rate:

| |

|

you have to edit the file .bashrc and add the line export SWAN_SYNC=0. | |

| ID: 19367 | Rating: 0 | rate:

| |
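For anyone who would rather not edit the file by hand, the step described above (add the line `export SWAN_SYNC=0` to `.bashrc`) could be sketched in Python as below. The helper name `ensure_export` is made up for illustration. Keep in mind that `.bashrc` is only read by interactive bash shells, so a BOINC client started as a system service may never see the variable; whether this works depends on how BOINC is launched on your distro.

```python
from pathlib import Path

EXPORT_LINE = "export SWAN_SYNC=0"


def ensure_export(rc_file: Path, line: str = EXPORT_LINE) -> bool:
    """Append `line` to rc_file unless it is already present.

    Creates the file if it does not exist.
    Returns True if the file was modified, False otherwise.
    """
    existing = rc_file.read_text() if rc_file.exists() else ""
    if line in (entry.strip() for entry in existing.splitlines()):
        return False  # already set, nothing to do

    # Start on a fresh line if the file does not end with a newline.
    separator = "" if existing == "" or existing.endswith("\n") else "\n"
    with rc_file.open("a") as handle:
        handle.write(f"{separator}{line}\n")
    return True
```

Calling `ensure_export(Path.home() / ".bashrc")` would apply it to your own account. Open a new terminal afterwards and start BOINC from there so the variable is inherited.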

you have to edit the file .bashrc and add the line export SWAN_SYNC=0. Where is this file? Edith says: I found 4 different ones in different places, 2 dot.bashrc, 2 bash.bashrc ____________ Gruesse vom Saenger For questions about Boinc look in the BOINC-Wiki | |

| ID: 19373 | Rating: 0 | rate:

| |

|

The file is in your home directory, but as it starts with a dot, it's hidden. | |

| ID: 19378 | Rating: 0 | rate:

| |

The file is in your home directory, but as it starts with a dot, it's hidden. There ain't anything like that. And of course I've set Nautilus to "Show Hidden Files". The only file with "bash" in it is .bash_history. ____________ Gruesse vom Saenger For questions about Boinc look in the BOINC-Wiki | |

| ID: 19380 | Rating: 0 | rate:

| |

|

Just create it then. | |

| ID: 19385 | Rating: 0 | rate:

| |

Just create it then. What's it supposed to do? Why should I create a hidden file in my main folder outside BOINC for your project? Why don't you include it in your program? What will other applications outside BOINC do with this? ____________ Gruesse vom Saenger For questions about Boinc look in the BOINC-Wiki | |

| ID: 19387 | Rating: 0 | rate:

| |

|

Don't do anything then. There will be another way of doing it sometime soon within your BOINC dir. Just create it then. | |

| ID: 19390 | Rating: 0 | rate:

| |

Just create it then.

When an interactive shell that is not a login shell is started, bash reads and executes commands from /etc/bash.bashrc and ~/.bashrc, if these files exist. Mine looks like this:

    # This file is sourced by all *interactive* bash shells on startup,
    # including some apparently interactive shells such as scp and rcp
    # that can't tolerate any output. So make sure this doesn't display
    # anything or bad things will happen !

    # Test for an interactive shell. There is no need to set anything
    # past this point for scp and rcp, and it's important to refrain from
    # outputting anything in those cases.
    if [[ $- != *i* ]] ; then
        # Shell is non-interactive. Be done now!
        return
    fi

    # Bash won't get SIGWINCH if another process is in the foreground.
    # Enable checkwinsize so that bash will check the terminal size when
    # it regains control. #65623
    # http://cnswww.cns.cwru.edu/~chet/bash/FAQ (E11)
    shopt -s checkwinsize

    # Enable history appending instead of overwriting. #139609
    shopt -s histappend

    # Change the window title of X terminals
    case ${TERM} in
        xterm*|rxvt*|Eterm|aterm|kterm|gnome*|interix)
            PROMPT_COMMAND='echo -ne "\033]0;${USER}@${HOSTNAME%%.*}:${PWD/$HOME/~}\007"'
            ;;
        screen)
            PROMPT_COMMAND='echo -ne "\033_${USER}@${HOSTNAME%%.*}:${PWD/$HOME/~}\033\\"'
            ;;
    esac

    use_color=false

    # Set colorful PS1 only on colorful terminals.
    # dircolors --print-database uses its own built-in database
    # instead of using /etc/DIR_COLORS. Try to use the external file
    # first to take advantage of user additions. Use internal bash
    # globbing instead of external grep binary.
    safe_term=${TERM//[^[:alnum:]]/?} # sanitize TERM
    match_lhs=""
    [[ -f ~/.dir_colors ]] && match_lhs="${match_lhs}$(<~/.dir_colors)"
    [[ -f /etc/DIR_COLORS ]] && match_lhs="${match_lhs}$(</etc/DIR_COLORS)"
    [[ -z ${match_lhs} ]] \
        && type -P dircolors >/dev/null \
        && match_lhs=$(dircolors --print-database)
    [[ $'\n'${match_lhs} == *$'\n'"TERM "${safe_term}* ]] && use_color=true

    if ${use_color} ; then
        # Enable colors for ls, etc. Prefer ~/.dir_colors #64489
        if type -P dircolors >/dev/null ; then
            if [[ -f ~/.dir_colors ]] ; then
                eval $(dircolors -b ~/.dir_colors)
            elif [[ -f /etc/DIR_COLORS ]] ; then
                eval $(dircolors -b /etc/DIR_COLORS)
            fi
        fi

        if [[ ${EUID} == 0 ]] ; then
            PS1='\[\033[01;31m\]\h\[\033[01;34m\] \W \$\[\033[00m\] '
        else
            PS1='\[\033[01;32m\]\u@\h\[\033[01;34m\] \w \$\[\033[00m\] '
        fi

        alias ls='ls --color=auto'
        alias grep='grep --colour=auto'
    else
        if [[ ${EUID} == 0 ]] ; then
            # show root@ when we don't have colors
            PS1='\u@\h \W \$ '
        else
            PS1='\u@\h \w \$ '
        fi
    fi

    # Try to keep environment pollution down, EPA loves us.
    unset use_color safe_term match_lhs

____________ | |

| ID: 19401 | Rating: 0 | rate:

| |

|

Re: Swan_Sync/SWAN_SYNC=0 | |

| ID: 20883 | Rating: 0 | rate:

| |

|

Did GPUGrid ever get the 460 running better/faster? | |

| ID: 20884 | Rating: 0 | rate:

| |

|

No | |

| ID: 20886 | Rating: 0 | rate:

| |

|

I just spent an hour going through this thread, from first to last. I gave up half way, bemused by all the technicalities. Why would I do that? | |

| ID: 21719 | Rating: 0 | rate:

| |

Can you please tell me if the GTX 460 can now perform to its maximum capability on GPUGRID, ... ? GPUGRID can still use only 2/3 of the shaders of any CC2.1 card (GTX 460, GTX 560 etc). It is not clear whether GPUGRID could ever use all of the shaders of CC2.1 cards. ..., or should I consider switching to folding@home? Should I answer this one too? | |

| ID: 21720 | Rating: 0 | rate:

| |

I just spent an hour going through this thread, from first to last. I gave up half way, bemused by all the technicalities. Why would I do that? Take a look at my machines and that should answer your question. Both GTX460's Oc'd GPU 850 Shaders 1700 Memory 2025 Voltage Stock Max Heat 68c ____________ Radio Caroline, the world's most famous offshore pirate radio station. Great music since April 1964. Support Radio Caroline Team - Radio Caroline | |

| ID: 21721 | Rating: 0 | rate:

| |

|

A GTX460 is far from being the best card, but it is still a good card and can certainly contribute; 70 to 80K per day, and no trouble finishing any of the tasks in time. | |

| ID: 21722 | Rating: 0 | rate:

| |

Can you please tell me if the GTX 460 can now perform to its maximum capability on GPUGRID, or should I consider switching to folding@home? Thanks for the response. I did take a look at your machines and I note you're processing the big WUs anywhere between 11 and 20 hours. But I don't see how that answers my question about shader use... Also, "GPU 850 Shaders 1700 Memory 2025" - does that equate with what I see on the Nvidia site: Graphics Clock 675, Processor Clock 1350 and Memory Clock 1800? What's "Voltage Stock" mean? Sorry if I'm being thick! | |

| ID: 21723 | Rating: 0 | rate:

| |

|

The Shaders (CUDA Cores) are fixed relative to the core clock. | |

| ID: 21724 | Rating: 0 | rate:

| |

The Shaders (CUDA Cores) are fixed relative to the core clock. Ah!! So by overclocking he has allowed more of the shaders to become active for GPUGRID. Right? Or not??? No - that can't be right. Nvidia claims 336 shaders on the reference rates. This is all very complicated!! | |

| ID: 21725 | Rating: 0 | rate:

| |

|

Overclocking increases the processing frequency, so it just makes the existing usable shaders, and core, faster. | |

| ID: 21726 | Rating: 0 | rate:

| |

NB. this OC is quite high and a stable 10% OC is more commonly the limit. That might well have been the case SK but not here as I hate to pay the extra to have the BIOS altered for a factory OC. The clocks I have achieved are from stock and in most cases are stable. I only run into trouble when I play 3d poker at the same time. :) but if I have the poker in low detail it works fine. The cards are PNY and MSI If anyone has 460's running at stock I would like to compare times. ____________ Radio Caroline, the world's most famous offshore pirate radio station. Great music since April 1964. Support Radio Caroline Team - Radio Caroline | |

| ID: 21727 | Rating: 0 | rate:

| |

|

I looked into this some time ago and found that return times fell fairly linearly with the rise in shader clock speed, but it would be good to see if that still holds true, and for different tasks. | |

| ID: 21729 | Rating: 0 | rate:

| |

|

You can compare them with mine, Betting Slip. I have two stock GTX460 (961MB) GPUs. From what I could see yours are approx. 25% faster. | |

| ID: 21732 | Rating: 0 | rate:

| |
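The "approx. 25% faster" observation lines up with the clocks quoted earlier in the thread, if run time falls roughly in proportion to shader clock as suggested above. The sketch below uses only figures from this thread (reference 675/1350 MHz, overclocked 850/1700 MHz). The 2:1 shader-to-core ratio is taken from those numbers, and the function names are made up for illustration.

```python
SHADER_TO_CORE_RATIO = 2  # inferred from 675/1350 and 850/1700 in this thread


def shader_clock_mhz(core_mhz: float) -> float:
    """Shader clock implied by a given core clock on these cards."""
    return SHADER_TO_CORE_RATIO * core_mhz


def expected_speedup(stock_core_mhz: float, oc_core_mhz: float) -> float:
    """Fractional throughput gain, assuming it scales linearly with clock."""
    return oc_core_mhz / stock_core_mhz - 1.0


stock, overclocked = 675, 850
print(f"shader clocks: {shader_clock_mhz(stock):.0f} -> {shader_clock_mhz(overclocked):.0f} MHz")
print(f"expected gain: {expected_speedup(stock, overclocked):.1%}")
```

Real tasks will not scale perfectly with clock, so treat the result as an upper bound and not a prediction.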

|

Thanks Aon, 25% looks about the case. Please tell me, have you left 2 cores free for your cards? | |

| ID: 21734 | Rating: 0 | rate:

| |

|

No, they are all crunching Rosetta. Each GPU wu uses 0.08% of a CPU core. | |

| ID: 21735 | Rating: 0 | rate:

| |

|

That will probably add an hour to your GPUG tasks because not only will they have to fight for CPU time, they will also have to contend with I/O congestion due to the virtual cores. | |

| ID: 21736 | Rating: 0 | rate:

| |

|

I thought the tasks take the amount of CPU necessary to run by default. Are you saying that it is possible to use an entire core for one task? If that is the case, what do I have to do? The "KKFREE" tasks finish in about 24 hours + the time it takes for uploading, so I always miss the 50% bonus with these. | |

| ID: 21737 | Rating: 0 | rate:

| |

|

FAQ - Best configurations for GPUGRID

<cc_config> <options> <report_results_immediately>1</report_results_immediately> </options> </cc_config> It would be interesting to measure how much gain there still is when using swan_sync and freeing up a CPU core. For sure it's less than what it used to be, but that's just because the tasks are more efficient when it's not in use. It is probably around 10% now and might vary with different task types. | |

| ID: 21738 | Rating: 0 | rate:

| |

|

Thanks skgiven. I will try report_results_immediately. Swan_sync sounds complicated to me. | |

| ID: 21739 | Rating: 0 | rate:

| |

|

SWAN_SYNC is fairly easy to setup and use on Windows.

Click Advanced System Settings (left side), then Environment Variables. Under System Variables, click New. For the Variable name type SWAN_SYNC. For the Variable value type 0. To report tasks immediately on Vista or Win 7:

<cc_config> <options> <report_results_immediately>1</report_results_immediately> </options> </cc_config> Then 'Save As' and type the .xml file extension, cc_config.xml (you will have to allow Notepad to save all file types in the Save As window). | |

| ID: 21740 | Rating: 0 | rate:

| |
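If typing XML into Notepad feels error-prone (a missing opening or closing tag is easy to overlook), the same `cc_config.xml` content can be generated with a short script. This is a minimal sketch using Python's standard library. The function name is made up, and where the file has to be saved (the BOINC data directory) is left to you because it differs between installations.

```python
import xml.etree.ElementTree as ET


def build_cc_config(report_immediately: bool = True) -> str:
    """Return cc_config.xml content with report_results_immediately set."""
    root = ET.Element("cc_config")
    options = ET.SubElement(root, "options")
    flag = ET.SubElement(options, "report_results_immediately")
    flag.text = "1" if report_immediately else "0"
    # Serialise to a plain string; the output is compact, with no line breaks.
    return ET.tostring(root, encoding="unicode")


xml_text = build_cc_config()
print(xml_text)
```

Write the returned string to a file named `cc_config.xml` in the BOINC data directory, then restart the client or have it re-read its config file.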

Take a look at my machines and that should answer your question. OK. I had a brief flirtation with folding@home - too complicated, so here I am again. My Asus GTX 460 is up and running, crunching GPUGRID WUs. Also active is Asus's SmartDoctor. With SmartDoctor I set "Engine" to 850, your 'GPU 850'(?). Temperature is at 65C. With SmartDoctor I can also set "Vcore" and "Memory". Vcore ranges from 1 to 1.087. Memory ranges from (DDR) 3400 to (DDR) 3800. How do these setting relate to your "Shaders" and "Memory"? Perhaps I need a different control application? Thanks, Tom | |

| ID: 21742 | Rating: 0 | rate:

| |

|

Yes, GPU 850 and that should take your shaders to 1700. Memory should be 2025 | |

| ID: 21744 | Rating: 0 | rate:

| |

Try EVGA Precision. Google it. That's it! Core 855, Shader 1710, Memory 2027, Temperature 55C. Thank you!! Tom | |

| ID: 21745 | Rating: 0 | rate:

| |

Try EVGA Precision. Google it. Oops:  What I set on the right is not reflected on the left. And I did click "apply". What have I done wrong?? Thanks, Tom | |

| ID: 21748 | Rating: 0 | rate:

| |

|

Click "Apply" and check "Apply at windows startup" and reboot. | |

| ID: 21749 | Rating: 0 | rate:

| |

|

That looks like the clock speeds were throttled by the NVidia drivers. | |

| ID: 21750 | Rating: 0 | rate:

| |

Click "Apply" and check "Apply at windows startup" and reboot. That did it. Thank you! Tom | |

| ID: 21758 | Rating: 0 | rate:

| |

|

I think I'm finally getting the hang of getting the best out of my GTX 460. I only run the long WUs, in about 18.5 hours. After the current WU is finished I'm ready to reboot with SWAN_SYNC 0, and report results immediately. I've already freed up a core for the GPU. | |

| ID: 21778 | Rating: 0 | rate:

| |

Is there a way to persuade BOINC to download, say, one hour before the active WU finishes? If you have 24/7 connectivity then in your preferences set "Connect about every..." to 0 and "Additional work buffer" to 0.1. That's what I have and I get a new task about an hour before the running task finishes. | |

| ID: 21779 | Rating: 0 | rate:

| |

|

Hi | |

| ID: 21780 | Rating: 0 | rate:

| |

Is there a way to persuade BOINC to download, say, one hour before the active WU finishes? Great! Thank you!! Did that... | |

| ID: 21782 | Rating: 0 | rate:

| |

Boinc manager (advanced view)/advanced/preferences/network usage/... I'm running BOINC 6.12.33 and the preferences are under the tools tab... | |

| ID: 21783 | Rating: 0 | rate:

| |

Is there a way to persuade BOINC to download, say, one hour before the active WU finishes? Hi. All this is fine, but the most effective way to control when tasks are downloaded is the following. In the Projects tab select "No new tasks", and when you want more work simply select "Allow new tasks". To make the best use of working time it is best to wait until the previous tasks have been uploaded before asking for more, especially if long run times put the bonus at risk. Besides controlling when tasks are downloaded, this stops the BOINC client from repeatedly asking the server for work only to be told there is nothing to send. Greetings. | |

| ID: 21784 | Rating: 0 | rate:

| |

Is there a way to persuade BOINC to download, say, one hour before the active WU finishes? Just set connect to 0 and additional to 0 and it won't download a new one until the last one is finished ____________ Radio Caroline, the world's most famous offshore pirate radio station. Great music since April 1964. Support Radio Caroline Team - Radio Caroline | |

| ID: 21794 | Rating: 0 | rate:

| |

Can you please tell me if the GTX 460 can now perform to its maximum capability on GPUGRID, ... ? Has this problem been fixed in the new version? | |

| ID: 21840 | Rating: 0 | rate:

| |

|

Unfortunately no. | |

| ID: 21844 | Rating: 0 | rate:

| |

|

Thanks for the reply. It seems like such an easy fix. Test for compute capability 2.1, then use 48 shaders per SM instead of 32 for the compute 2.1 cards. Kind of makes one wonder. Am I missing something? | |

| ID: 21846 | Rating: 0 | rate:

| |
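
The check suggested above can be sketched in a few lines. This only illustrates the arithmetic (32 CUDA cores per SM for compute capability 2.0, 48 for 2.1); it says nothing about how the ACEMD application is really written, and the function names `cores_per_sm` and `total_cores` are hypothetical.

```python
def cores_per_sm(major: int, minor: int) -> int:
    """CUDA cores per multiprocessor for the two Fermi compute capabilities."""
    if (major, minor) == (2, 0):
        return 32  # e.g. GTX 465/470/480
    if (major, minor) == (2, 1):
        return 48  # e.g. GTX 460, GTS 450
    raise ValueError(f"compute capability {major}.{minor} not covered by this sketch")


def total_cores(sm_count: int, major: int, minor: int) -> int:
    """Total CUDA cores on a card with sm_count multiprocessors."""
    return sm_count * cores_per_sm(major, minor)


if __name__ == "__main__":
    # GTX 460: 7 SMs at compute capability 2.1
    print("GTX 460:", total_cores(7, 2, 1))
    # GTX 480: 15 SMs at compute capability 2.0
    print("GTX 480:", total_cores(15, 2, 0))
```

Counting the cores correctly is the easy part. Whether the extra 16 cores per SM can actually be kept busy depends on how the kernels are scheduled, which may be why the fix is not as simple as it looks.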

|

Well - after a month+ of crunching GPUGRID on my Asus GTX 460 I have to say I'm very happy with its performance. | |

| ID: 21880 | Rating: 0 | rate:

| |

|

acemdlong_6.15_windows_intelx86__cuda31 | |

| ID: 22132 | Rating: 0 | rate:

| |

acemdlong_6.15_windows_intelx86__cuda31 Please give us details of the crash. If you get a blue screen tell us the stop code. ____________ | |

| ID: 22143 | Rating: 0 | rate:

| |

|

Currently running a GTS450 on one system and, all being well, will be adding a GTX460 to my other system. I'm not a hardened cruncher but GPUGRID seems to be a good second project to run alongside Docking. | |

| ID: 22145 | Rating: 0 | rate:

| |

|

Hi Rantanplan, the error you had occurred after 35 seconds on a bad task; the same task failed on other GPUs as well. | |

| ID: 22147 | Rating: 0 | rate:

| |

TheFiend, while the GTX460 is a reasonable GPU, the higher end CC2.0 GPUs are better value (for crunching here) overall. That said, adding a GTS450 to a system with an average PSU might well be your best option; the GTS450 requires one 6-pin power connector. Docking is my main project, GPUGRID is just a side project, and I have only just started GPU crunching after cutting down on the number of crunchers I run. Value-wise, for me, a GTX460 fits the bill perfectly - the proceeds of selling a CPU/mobo/RAM bundle will pay for it. | |

| ID: 22151 | Rating: 0 | rate:

| |

|

Decided to get an EVGA GTX 550 Ti instead of a 460. | |

| ID: 22192 | Rating: 0 | rate:

| |

|

This card works well with ACEMD standard WU | |

| ID: 22273 | Rating: 0 | rate:

| |

This card works well with ACEMD standard WU My ASUS GTX 460 runs "ACEMD for long runs" exclusively, 24/7, and they complete anywhere between 10.5 and 16.5 hours, giving me the 50% bonus every time. Try it!! ____________ | |

| ID: 22362 | Rating: 0 | rate:

| |
